(TL;DR: I've been trying out btrfs in some places instead of ext4, I've hit absolutely zero issues and there are a few features that make me plan to use it more.)

Despite (or perhaps because of) working on storage products for a reasonable chunk of my career I have tended towards a conservative approach to my filesystems. By the time I came to Linux ext2 was well established, the move to ext3 was a logical one (the joys of added journalling for faster recovery after unclean shutdowns) and for a long time my default stack has been MD raid with LVM2 on top and then ext4 as the filesystem.

I've dabbled with other filesystems; I ran XFS for a while on my VDR machine, and also when I had a large tradspool with INN, but never really had a hard requirement for it. I've ended up adminning a machine that had JFS in the past, largely for historical reasons, but don't really remember any issues (vague recollections of NFS problems but that might just have been NFS being NFS).

However, ZFS has gathered itself a significant fan base and that makes me wonder about what it can offer and whether I want that. Firstly, let's be clear that I'm never going to run a primary filesystem that isn't part of the mainline kernel. So ZFS itself is out, because I run Linux. So what do I want that I can't get with ext4? Firstly, I'd like data checksumming. As storage gets larger there's a bigger chance of silent data corruption and while I have backups of the important stuff that doesn't help if you don't know you need to use them. Secondly, these days I have machines running containers, VMs, or with lots of source checkouts with a reasonable amount of overlap in their data. Disk space has got cheaper, but I'd still like to be able to do some sort of deduplication of common blocks.

So, I've been trying out btrfs. When I installed my desktop I went with btrfs for / and /home (I kept /boot as ext4). The thought process was that this was a local machine (so easy access if it all went wrong) and I take regular backups (so if it all went wrong I could recover). That was a year and a half ago and it's been pretty dull; I mostly forget I'm running btrfs instead of ext4. This is on a machine that tracks Debian testing, so currently on kernel 6.1 but originally installed with 5.10. So it seems modern btrfs is reasonably stable for a machine that isn't driven especially hard. Good start.

The fact I forget what filesystem I'm running points to the fact that I'm not actually doing anything special here. I get the advantage of data checksumming, but not much else. Two things spring to mind. Firstly, I don't do snapshots. Given I run testing it might be wiser if I did take a snapshot before every apt-get upgrade, and I have a friend who does just that, but even when I've run unstable I've never had a machine get itself into a state that I couldn't recover so I haven't spent time investigating. I note Ubuntu has apt-btrfs-snapshot but it doesn't seem to have had any updates for years.
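For anyone who does want the snapshot-before-upgrade habit, a minimal sketch of the idea follows. It only builds the `btrfs subvolume snapshot -r` command line rather than running it, and the `/.snapshots` directory and `pre-upgrade-` naming are assumptions made up for illustration, not anything apt-btrfs-snapshot or my friend's setup is known to use. The source path has to be a subvolume (or the top of the filesystem) and the destination has to live on the same btrfs filesystem.

```python
from datetime import datetime, timezone


def snapshot_command(source, snapshot_dir, now=None):
    """Build the argv for a read-only snapshot of `source`, named by UTC timestamp."""
    now = now or datetime.now(timezone.utc)
    # Hypothetical naming scheme: pre-upgrade-<timestamp>
    name = "pre-upgrade-" + now.strftime("%Y%m%dT%H%M%SZ")
    dest = snapshot_dir.rstrip("/") + "/" + name
    # -r asks btrfs for a read-only snapshot, so it cannot change after creation
    return ["btrfs", "subvolume", "snapshot", "-r", source, dest]


if __name__ == "__main__":
    # Print instead of executing: taking a snapshot needs root and a btrfs mount.
    print(" ".join(snapshot_command("/", "/.snapshots")))
```

The printed command could then be run by hand (or from a wrapper script) just before `apt-get upgrade`.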

The other thing I didn't do when I installed my desktop is take advantage of subvolumes. I'm still trying to get my head around exactly what I want them for, but they provide a partial replacement for LVM when it comes to carving up disk space. Instead of the separate / and /home LVs I created I could have created a single LV that would have a single btrfs filesystem on it. / and /home would then be separate subvolumes, allowing me to snapshot each individually. Quotas can also be applied separately so there's still the potential to prevent one subvolume taking all available space.
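As a rough sketch of what that single-filesystem layout might involve, the snippet below lists the btrfs commands for enabling quotas, creating one subvolume per name and capping each one. The mount point `/mnt/pool`, the subvolume names and the sizes are all hypothetical, and the commands are only generated, not executed.

```python
def subvolume_plan(mountpoint, limits):
    """Return btrfs commands that create one subvolume per name and cap its size."""
    base = mountpoint.rstrip("/")
    # Quotas have to be switched on for the filesystem before limits apply
    commands = [["btrfs", "quota", "enable", mountpoint]]
    for name, size in limits.items():
        path = f"{base}/{name}"
        commands.append(["btrfs", "subvolume", "create", path])
        # Limit the space the subvolume's quota group may use
        commands.append(["btrfs", "qgroup", "limit", size, path])
    return commands


if __name__ == "__main__":
    # Hypothetical layout: a root and a home subvolume on one filesystem
    for cmd in subvolume_plan("/mnt/pool", {"root": "50G", "home": "200G"}):
        print(" ".join(cmd))
```

Unlike LVs, the limits here can be changed later without resizing anything, which is the "without hard partitioning" appeal.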

Encouraged by the lack of hassle with my desktop I decided to try moving my sbuild machine over to use btrfs for its build chroots. For Reasons this is a VM kindly hosted by a friend, rather than something local. To be honest these days I would probably go for local hosting, but it works and there's no strong reason to move. The point is it's remote, and so if migrating went wrong and I had to ask for assistance I'd be bothering someone who's doing me a favour as it is.

The build VM is, of course, running LVM, and there was luckily some free space available. I'm reasonably sure the underlying storage involves spinning rust, so I did a laborious set of pvmove commands to make sure all the available space was at the start of the PV, and created a new btrfs volume there. I was advised that while btrfs-convert would do the job it was better to create a fresh filesystem where possible. This time I did create an initial root subvolume.

Configuring up sbuild was then much simpler than I'd expected. My setup originally started out as a set of tarballs for the chroots that would get untarred + used for the builds, which is pretty slow. Once overlayfs was mature enough I switched to that. I'd had a conversation with Enrico about his nspawn/btrfs setup, but it turned out Russ Allbery had written an excellent set of instructions on sbuild with btrfs. I tweaked my existing setup based on his details, and I was in business. Each chroot is a separate subvolume - I don't actually end up having to mount them individually, but it means that only the chroot in use gets snapshotted. For example during a build the following can be observed:

# btrfs subvolume list /

ID 257 gen 111534 top level 5 path root

ID 271 gen 111525 top level 257 path srv/chroot/unstable-amd64-sbuild

ID 275 gen 27873 top level 257 path srv/chroot/bullseye-amd64-sbuild

ID 276 gen 27873 top level 257 path srv/chroot/buster-amd64-sbuild

ID 343 gen 111533 top level 257 path srv/chroot/snapshots/unstable-amd64-sbuild-328059a0-e74b-4d9f-be70-24b59ccba121

I was a little confused about whether I'd got something wrong because the snapshot top level is listed as 257 rather than 271, but digging further with btrfs subvolume show on the 2 mounted directories correctly showed the snapshot had a parent equal to the chroot, not /.
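To make the distinction concrete, here is a small sketch that parses the listing above and groups entries by their `top level` value. My understanding (an assumption, not something the output states) is that `top level` records which subvolume contains the entry in the directory tree, while the snapshot's origin is tracked separately and is what `btrfs subvolume show` reports as the parent. The helper names are made up for this example.

```python
import re
from collections import defaultdict

# Matches lines such as: ID 257 gen 111534 top level 5 path root
LINE = re.compile(r"^ID (\d+) gen (\d+) top level (\d+) path (.+)$")

SAMPLE = """\
ID 257 gen 111534 top level 5 path root
ID 271 gen 111525 top level 257 path srv/chroot/unstable-amd64-sbuild
ID 275 gen 27873 top level 257 path srv/chroot/bullseye-amd64-sbuild
ID 276 gen 27873 top level 257 path srv/chroot/buster-amd64-sbuild
ID 343 gen 111533 top level 257 path srv/chroot/snapshots/unstable-amd64-sbuild-328059a0-e74b-4d9f-be70-24b59ccba121
"""


def parse_subvolume_list(text):
    """Turn `btrfs subvolume list` output into a list of dicts."""
    subvols = []
    for line in text.splitlines():
        m = LINE.match(line.strip())
        if m:
            subvols.append({
                "id": int(m.group(1)),
                "gen": int(m.group(2)),
                "top_level": int(m.group(3)),
                "path": m.group(4),
            })
    return subvols


def paths_by_top_level(subvols):
    """Group subvolume paths by the ID of the subvolume that contains them."""
    grouped = defaultdict(list)
    for sv in subvols:
        grouped[sv["top_level"]].append(sv["path"])
    return dict(grouped)


if __name__ == "__main__":
    for top, paths in paths_by_top_level(parse_subvolume_list(SAMPLE)).items():
        print(top, paths)
```

Seen this way, the snapshot sits under 257 simply because the `snapshots` directory lives in the root subvolume, not inside the chroot it was taken from.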

As a final step I ran jdupes via jdupes -1Br / to deduplicate things across the filesystem. It didn't end up providing a significant saving unfortunately - I guess there's a reasonable amount of change between Debian releases - but I then tried it on my desktop, which tends to have a large number of similar source trees checked out. There I managed to save about 5% on /home, which didn't seem too shabby.
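For a feel of what file-level deduplication has to find, here is a minimal sketch that groups identical files by size and then by SHA-256 hash. It is not how jdupes is implemented and it does not share any extents on disk; it only reports candidate groups, and the function names are invented for the example.

```python
import hashlib
import os
from collections import defaultdict


def file_digest(path, chunk_size=1 << 20):
    """SHA-256 of a file, read in chunks so large files do not fill memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def find_duplicates(root):
    """Return sorted groups of regular files under `root` with identical content."""
    by_size = defaultdict(list)
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            path = os.path.join(dirpath, name)
            # Skip symlinks and anything that is not a regular file
            if os.path.isfile(path) and not os.path.islink(path):
                by_size[os.path.getsize(path)].append(path)

    groups = []
    for size, paths in by_size.items():
        # Files with a unique size cannot have a duplicate; skip empty files too
        if size == 0 or len(paths) < 2:
            continue
        by_hash = defaultdict(list)
        for path in paths:
            by_hash[file_digest(path)].append(path)
        groups.extend(sorted(g) for g in by_hash.values() if len(g) > 1)
    return groups


if __name__ == "__main__":
    for group in find_duplicates("."):
        print(group)
```

Checking sizes first keeps the expensive hashing to files that could plausibly match, which matters on a tree full of source checkouts.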

The sbuild setup has been in place for a couple of months now, and I've run quite a few builds on it while preparing for the freeze. So I'm fairly confident in the stability of the setup and my next move is to transition my local house server over to btrfs for its containers (which all run under systemd-nspawn). Those are generally running a Debian stable base so there should be a decent amount of commonality for deduping.

I'm not saying I'm yet at the point where I'll default to btrfs on new installs, but I'm definitely looking at it for situations where I think I can get benefits from deduplication, or being able to divide up disk space without hard partitioning space.

(And, just to answer the worry I had when I started, I've got nowhere near ENOSPC problems, but I believe they're handled much more gracefully these days. And my experience of ZFS when it got above 90% utilization was far from ideal too.)

About a week back Jio launched a laptop called JioBook that will be manufactured in China

Unlike the Americans, who chose the path of more competition, we have chosen the path of more monopolies. So even though I very much liked Louis's project, sooner or later finding the devices themselves would be hard. While the recent notification is for laptops, what stops them from doing the same with mobiles or even desktop systems? As it is, both the smartphone and desktop markets have been contracting since last year as food inflation has gone up.

Add to that, availability of products has been made scarce (whether by design or not is unknown). The end result is that the latest processor launched overseas becomes the new thing here 3-4 years later. And that was before this notification. This will only decrease competition and make the Ambanis rich at the cost of everyone else. So much for "ease of doing business". Also, the backlash has been pretty tepid. So what I shared will probably happen again sooner or later.

The only interesting thing is that it's based on Android, probably in part due to the issues people are seeing in both Windows 10 and 11 and whatnot.

Till later.

Update: The Print tried a decluttering but instead cluttered the topic. While all of what he shared was true, and it is certainly a step backwards, he didn't need to show how most Indians had to go to the RBI for the same. I remember my Mamaji doing the same and sharing afterwards that all he had was $100 for a day which, while being a big sum, was paltry if you were staying in a hotel and were there on company business. He survived on bananas and whatever cheap veg. he could find then. This was almost 35-40 odd years ago. As shared, the Govt. has been making missteps for quite some time now. The Print does try to take a balanced view so it doesn't run counter to the Government, but even it knows that this is a bad take. The whole thing about security is just laughable; did they wake up after 9 years? And now, in its own wisdom, it has apparently shifted the ban from now to 3 months later. Of course, most people on the right are just applauding without understanding the complexities and implications of the same. Vendors like Samsung and Apple who have set up assembly operations would do a double-think and shift to Taiwan, Vietnam, Mexico, anywhere. Global money follows global trends. And such missteps do not help.

Last night I attended the first local Linux User Group talk since before the pandemic (possibly even long before the pandemic!)

Topic: How and why Atomic Access runs Debian on a 100Gbps router

Speaker: Joe Botha

This is the first time CLUG used

When I travel for a few days I don't usually

For the soles I may have gone a bit overboard with the vibram claw, but:

As for the finished weight, at 235 g for the pair I thought I could do better, but apparently shoes are considered ultralight if they are around 500 g? Using just one layer of mesh rather than two would probably help, but it would have required a few changes to the pattern, and anyway I don't really need to carry them around all day.

I've also added a loop of fabric (polycotton) to the centre back to be able to hang the slippers to the backpack when wet or dirty; a bit of narrow webbing may have been better, but I didn't have any in my stash.

The

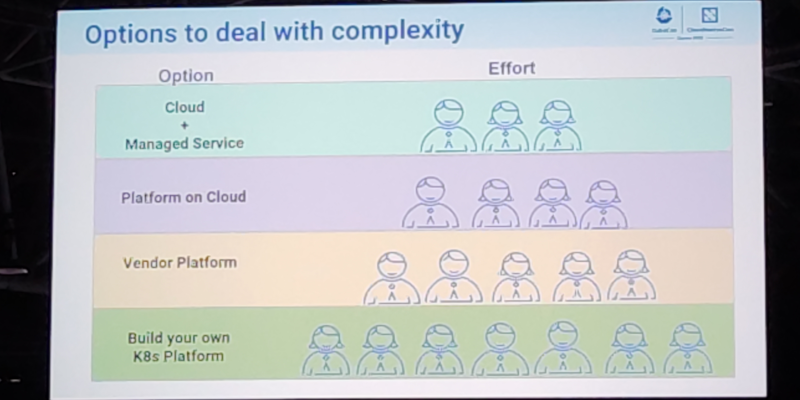

This post serves as a report from my attendance to Kubecon and CloudNativeCon 2023 Europe that took place in Amsterdam in April 2023. It was my second time physically attending this conference, the first one was in Austin, Texas (USA) in 2017. I also attended once in a virtual fashion.

The content here is mostly generated for the sake of my own recollection and learnings, and is written from the notes I took during the event.

The very first session was the opening keynote, which reunited the whole crowd to bootstrap the event and share the excitement about the days ahead. Some astonishing numbers were announced: there were more than 10,000 people attending, and apparently it could confidently be said that it was the largest open source technology conference taking place in Europe in recent times.

It was also communicated that the next couple of iterations of the event will be run in China in September 2023 and Paris in March 2024.

More numbers: the CNCF was hosting about 159 projects, involving 1300 maintainers and about 200,000 contributors. The cloud-native community is ever-increasing, and there seems to be a strong trend in the industry for cloud-native technology adoption and all things related to PaaS and IaaS.

The event program had different tracks, and in each one there was an interesting mix of low-level and higher-level talks for a variety of audiences. On many occasions I found that reading the talk title alone was not enough to know in advance if a talk was a 101 kind of thing or for experienced engineers. But unlike in previous editions, I didn't have the feeling that the purpose of the conference was to try selling me anything. Obviously, speakers would make sure to mention, or highlight in a subtle way, the involvement of a given company in a given solution or piece of the ecosystem. But it was non-invasive and fair enough for me.

On a different note, I found the breakout rooms to be often small. I think there were only a couple of rooms that could accommodate more than 500 people, which is a fairly small allowance for 10k attendees. I realized with frustration that the more interesting talks were immediately fully booked, with people waiting in line some 45 minutes before the session time. Because of this, I missed a few important sessions that I'll hopefully watch online later.

Finally, on a more technical side, I've learned many things which, instead of grouping by session, I'll group by topic, given how some subjects were mentioned in several talks.

On gitops and CI/CD pipelines

Most of the mentions went to

On etcd, performance and resource management

I attended a talk focused on etcd performance tuning that was very encouraging. They were basically talking about the

On jobs

I attended a couple of talks that were related to HPC/grid-like usages of Kubernetes. I was truly impressed by some folks out there who were using Kubernetes Jobs on massive scales, such as to train machine learning models and other fancy AI projects.

It is acknowledged in the community that the early implementation of things like Jobs and CronJobs had some limitations that are now gone, or at least greatly improved. Some new functionalities have been added as well. Indexed Jobs, for example, enable each Job to have a number (index) and process a chunk of a larger batch of data based on that index. It would allow for full grid-like features like sequential (or again, indexed) processing, coordination between Jobs and more graceful Job restarts. My first reaction was: Is that something we would like to enable in
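To illustrate the indexed pattern, here is a minimal worker sketch. As I understand it, in an Indexed Job Kubernetes hands each Pod its index through the `JOB_COMPLETION_INDEX` environment variable; everything else here (the `TOTAL_COMPLETIONS` variable, the shard names and the round-robin split) is an assumption made up for the example.

```python
import os


def chunk_for_index(items, index, completions):
    """Return the slice of `items` that the worker with this index should process."""
    if not 0 <= index < completions:
        raise ValueError("index must be in range(completions)")
    # Round-robin split: worker i takes items i, i + completions, i + 2*completions, ...
    return items[index::completions]


if __name__ == "__main__":
    # JOB_COMPLETION_INDEX is provided to Pods of an Indexed Job
    index = int(os.environ.get("JOB_COMPLETION_INDEX", "0"))
    # TOTAL_COMPLETIONS is a hypothetical variable the Job spec would have to set itself
    completions = int(os.environ.get("TOTAL_COMPLETIONS", "1"))
    work = [f"shard-{n:03d}" for n in range(10)]
    for item in chunk_for_index(work, index, completions):
        print("processing", item)
```

Because every worker derives its share purely from its index, a restarted Pod picks up exactly the same chunk as the one it replaces.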

I wrote the

Wrong sort of shim, but canned language bindings would be handy

This is what I imagine it looks like inside these libraries

Given that

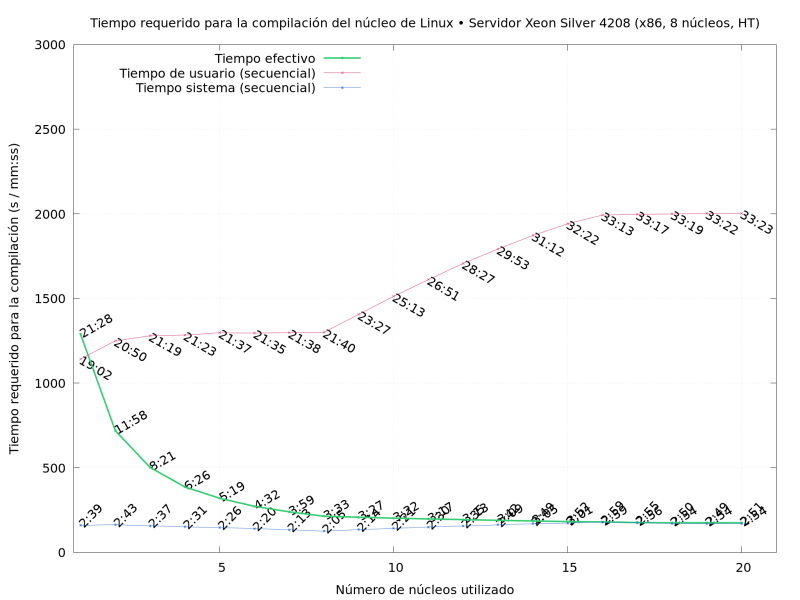

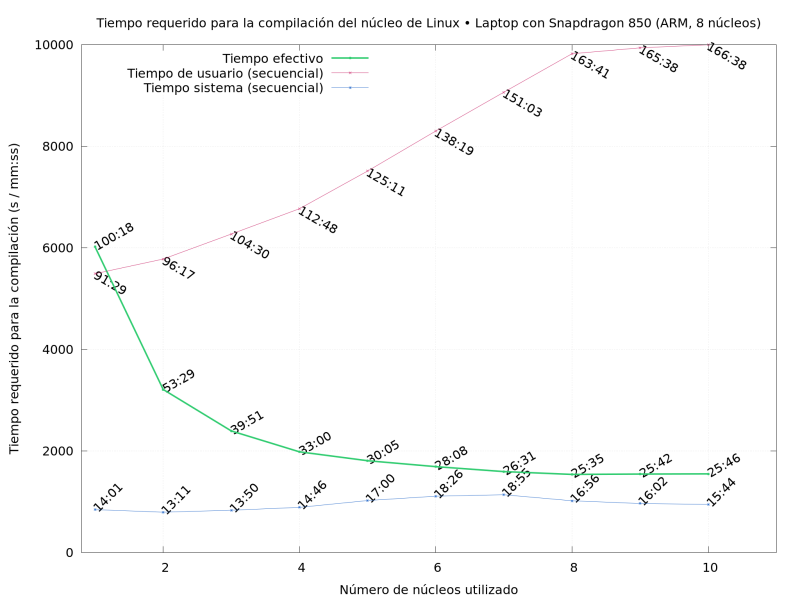

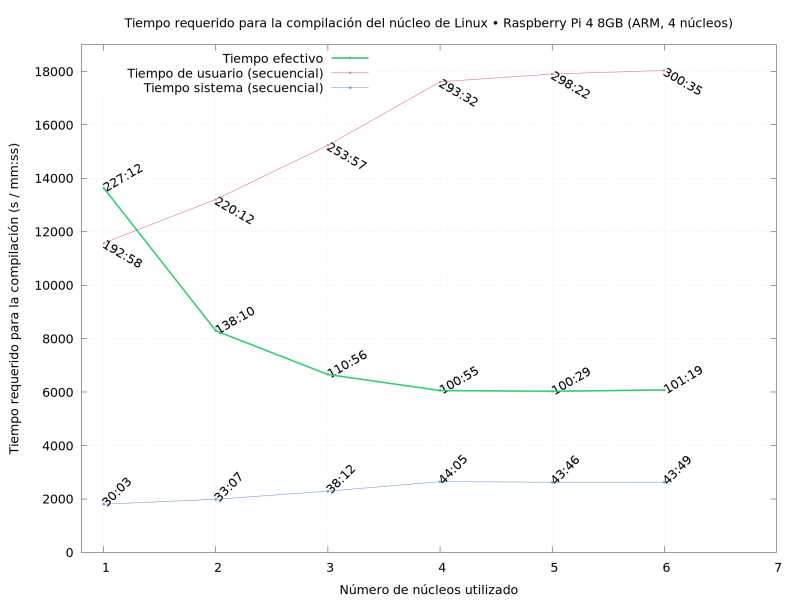

So what did I do? I compiled Linux repeatedly, on several of the machines I had available, varying the

A few years ago a friend told me that her usual fabric shop was closing down and had a sale on all remaining stock.

While being sad for yet another brick and mortar shop that was going to be missed (at least it was because the owners were retiring, not because it wasn't sustainable anymore), of course I couldn't miss the opportunity.

So we drove a few hundred km, had some nice time with a friend that (because of said few hundred km) we rarely see, and spent a few hours looting the corps... er, helping the shop owner get rid of stock before their retirement.

Among other things there was a cut of lightweight Swiss embroidery cotton in blue which may or may not have been enthusiastically grabbed with plans of Victorian underwear.

It was too nice to be buried under layers and layers of fabric (and I suspect that the embroidery wouldn't feel great directly on the skin under a corset), so the natural fit was something at the corset cover layer, and the fabric was enough for a combination garment of the kind often worn in the later Victorian age to prevent the accumulation of bulk at the waist.

It also has the nice advantage that in this time of corrupted morals it is perfectly suitable as outerwear as a nice summer dress.

Then life happened, the fabric remained in my stash for a long while, but finally this year I have a good late Victorian block that I can adapt, and with spring coming it was a good time to start working on the summer wardrobe.

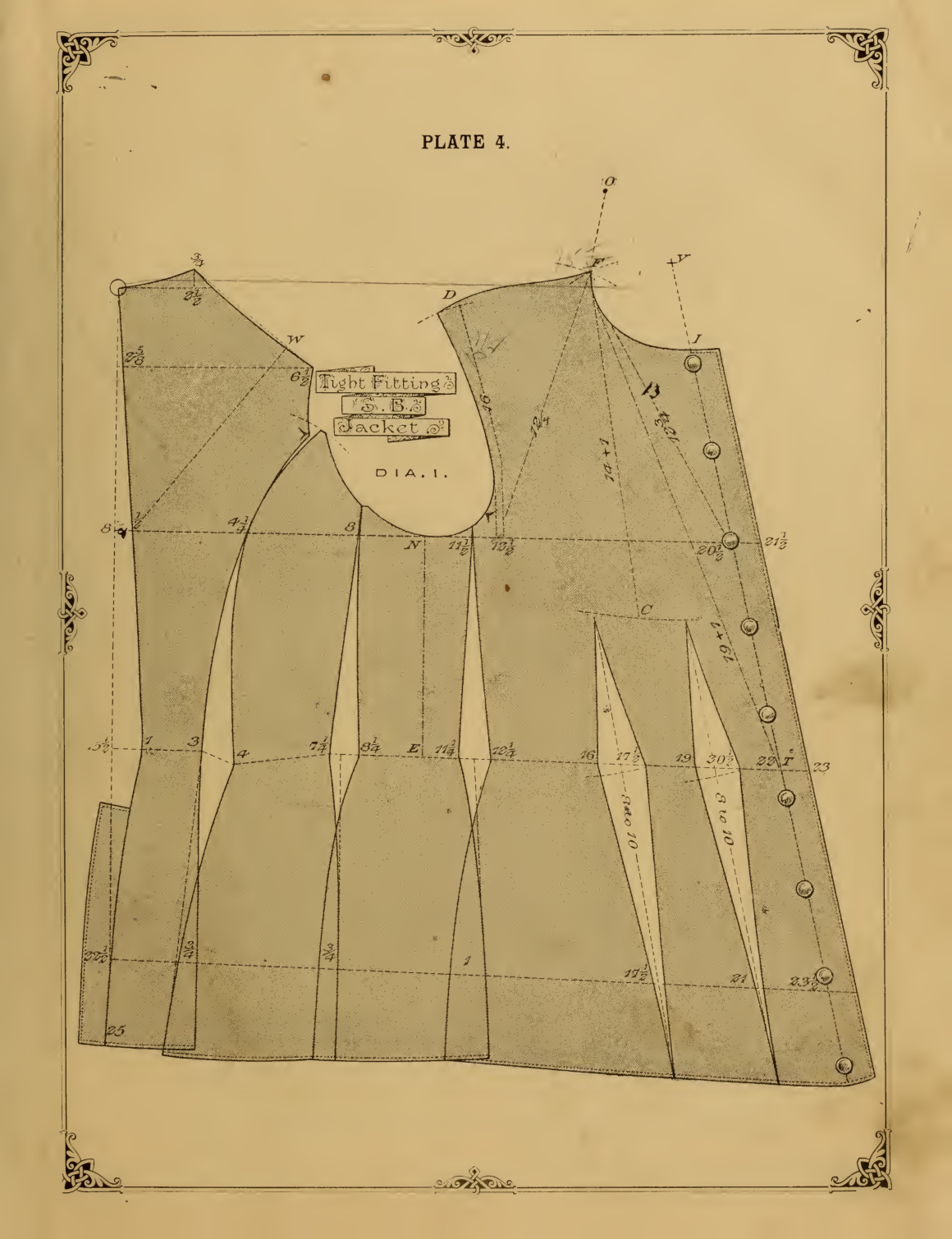

The block I've used comes from

The only design choice left was the pocket situation: I wanted to wear this garment both as underwear (where pockets aren't needed, and add unwanted bulk) and outerwear (where no pockets is not an option), and the fabric felt too thin to support the weight of the contents of a full pocket. So I decided to add slits into the seams, with just a modesty placket, and wear

First I had to finish attaching the ruffles, however, and this is when I cursed myself for not using the ruffler foot I have (it would have meant not having selvedges on all seams of the ruffle), and for pleating the ruffle rather than gathering it (I prefer the look of handsewn gathers, but here I'm sewing everything by machine, and that's faster, right? (it probably wasn't)).

Also, this is where I started to get low on pins, and I had to use the ones from the vintage

Except, maybe I shouldn't carry heavy items in my pockets when doing it? Oh, well.

I have other plans for the same pattern, but they involve making some crochet lace, so I expect I can aim at making them wearable in summer 2024.

Now I just have to wait for the weather to be a bit warmer, and then I can start enjoying this one.

On Tue 13 July 2021 there was a
